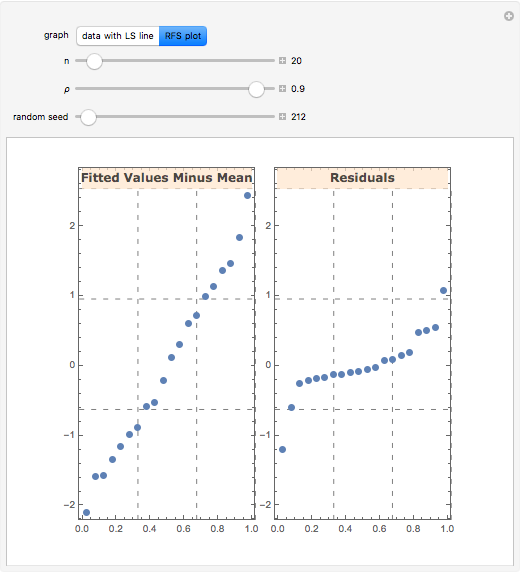

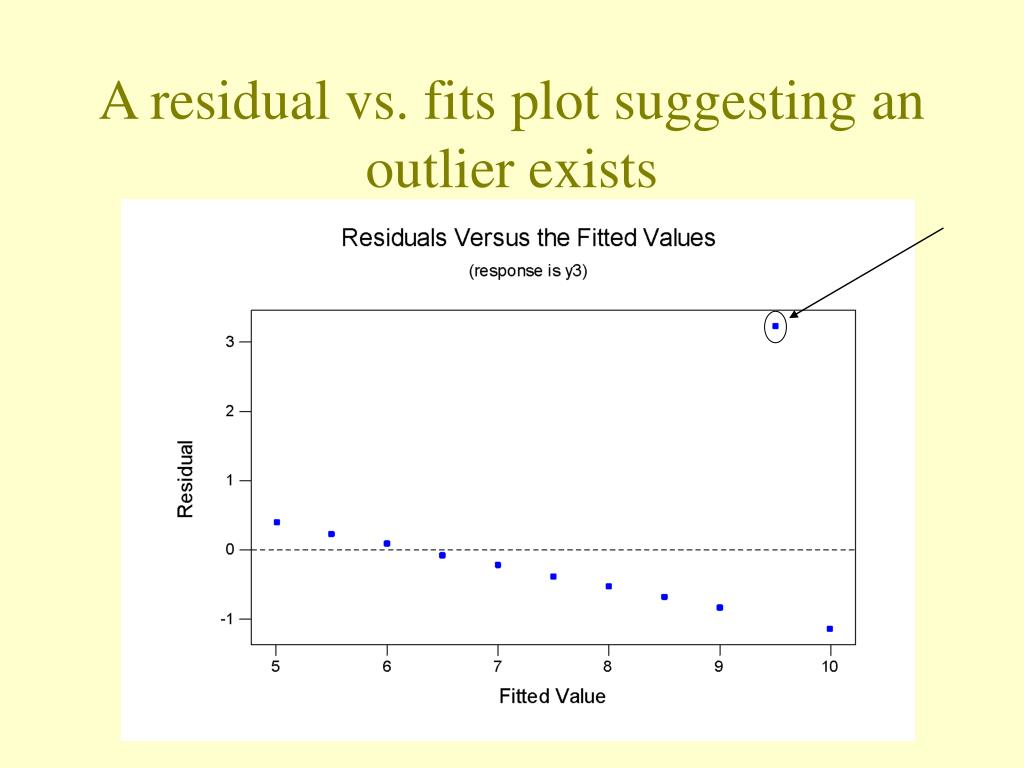

Suppose that the linear model (39) is correct.

$$\hat{e}_{LS} = e - H_c e + (I - H_c) Z_c \gamma \tag{71}$$

The linear regression model is used as the basis for establishing the nature of a relationship between values of an independent or predictor variable, $x$, and values of a dependent or response variable, $y$. The model specifies that $y$ is a random variable with a mean that is linearly related to $x$ and has a variance specified by the random variable $\varepsilon$. The first step in a regression analysis is to use $n$ pairs of observed $x$ and $y$ values to obtain least squares estimates of the model parameters $\beta_0$ and $\beta_1$. The next step is to estimate the variance of the random error. This quantity is defined as the variance of the residuals from the regression but is computed from a partitioning of sums of squares. This partitioning is also used for the test of the null hypothesis that the regression relationship does not exist.

An alternate and equivalent test for the hypothesis $\beta_1 = 0$ is provided by a $t$ statistic, which can also be used for one-tailed tests, to test for any specified value of $\beta_1$, and to construct a confidence interval. Inferences on the response variable include confidence intervals for the conditional mean as well as prediction intervals for a single observation. The correlation coefficient is a measure of the strength of a linear relationship between two variables. This measure is also useful when there is no independent/dependent variable relationship. The square of the correlation coefficient is used to describe the effectiveness of a linear regression.

As for most statistical analyses, it is important to verify that the assumptions underlying the model are fulfilled. Of special importance are the assumptions of proper model specification, homogeneous variance, and lack of outliers. In regression, this can be accomplished by examining the residuals. Additional methods are provided in Chapter 8.
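The steps summarized here can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the text's own procedure: the data values, the function names `simple_linear_regression` and `intervals`, and the dictionary keys are all hypothetical, and the critical value 3.182 is the tabled two-sided 95% $t$ value for 3 degrees of freedom, supplied by hand rather than computed.

```python
import math

def simple_linear_regression(x, y):
    """Least squares fit of y = b0 + b1*x with the usual summary statistics."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    syy = sum((yi - y_bar) ** 2 for yi in y)  # total sum of squares

    # Step 1: least squares estimates of the slope and intercept.
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar

    # Step 2: partition the total sum of squares and estimate the error variance.
    ss_reg = b1 * sxy          # sum of squares due to regression
    ss_err = syy - ss_reg      # residual (error) sum of squares
    mse = ss_err / (n - 2)     # estimated variance of the random error

    # Tests of the hypothesis that the slope is zero: F and the equivalent t.
    f_stat = ss_reg / mse
    se_b1 = math.sqrt(mse / sxx)
    t_stat = b1 / se_b1        # t_stat ** 2 equals f_stat

    # Correlation coefficient; its square is the share of variation explained.
    r = sxy / math.sqrt(sxx * syy)

    # Residuals, for checking specification, equal variance, and outliers.
    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]

    return {"n": n, "x_bar": x_bar, "sxx": sxx, "b0": b0, "b1": b1,
            "mse": mse, "f_stat": f_stat, "se_b1": se_b1, "t_stat": t_stat,
            "r": r, "residuals": residuals}

def intervals(fit, x0, t_crit):
    """Confidence interval for the mean of y at x0 and prediction interval
    for a single new observation at x0, given a tabled t critical value."""
    y_hat = fit["b0"] + fit["b1"] * x0
    leverage = 1 / fit["n"] + (x0 - fit["x_bar"]) ** 2 / fit["sxx"]
    se_mean = math.sqrt(fit["mse"] * leverage)
    se_pred = math.sqrt(fit["mse"] * (1 + leverage))
    ci = (y_hat - t_crit * se_mean, y_hat + t_crit * se_mean)
    pi = (y_hat - t_crit * se_pred, y_hat + t_crit * se_pred)
    return ci, pi

# Made-up illustration data (not from the text).
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

fit = simple_linear_regression(x, y)
ci, pi = intervals(fit, 3.0, 3.182)  # 3.182: tabled t value, 3 df, 95% two-sided

print("slope:", fit["b1"], "intercept:", fit["b0"])
print("F:", fit["f_stat"], "t:", fit["t_stat"], "r squared:", fit["r"] ** 2)
print("CI for mean at x=3:", ci)
print("Prediction interval at x=3:", pi)
```

The prediction interval is always wider than the confidence interval for the mean at the same $x$, because it adds the variance of a single new observation to the uncertainty in the fitted line. Plotting the returned residuals against $x$ is one simple way to look for curvature, unequal spread, or outlying points.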
